Integration 1 - AI-Augmented Syllabus Agent

One of the core challenges with AI in education today is how to ethically integrate Large Language Models (LLMs) in ways that are Auditable, Appropriate, and Assessable. This AAA approach to AI augmentation in the classroom supports a better understanding both for educators and for the students who ultimately must navigate this tool.

As educators work to support the evolution of pedagogy, they must evaluate many aspects of their traditional approach to teaching. In support of this effort, I used ChatGPT 5.3 to develop a Syllabus Augmentation Agent.

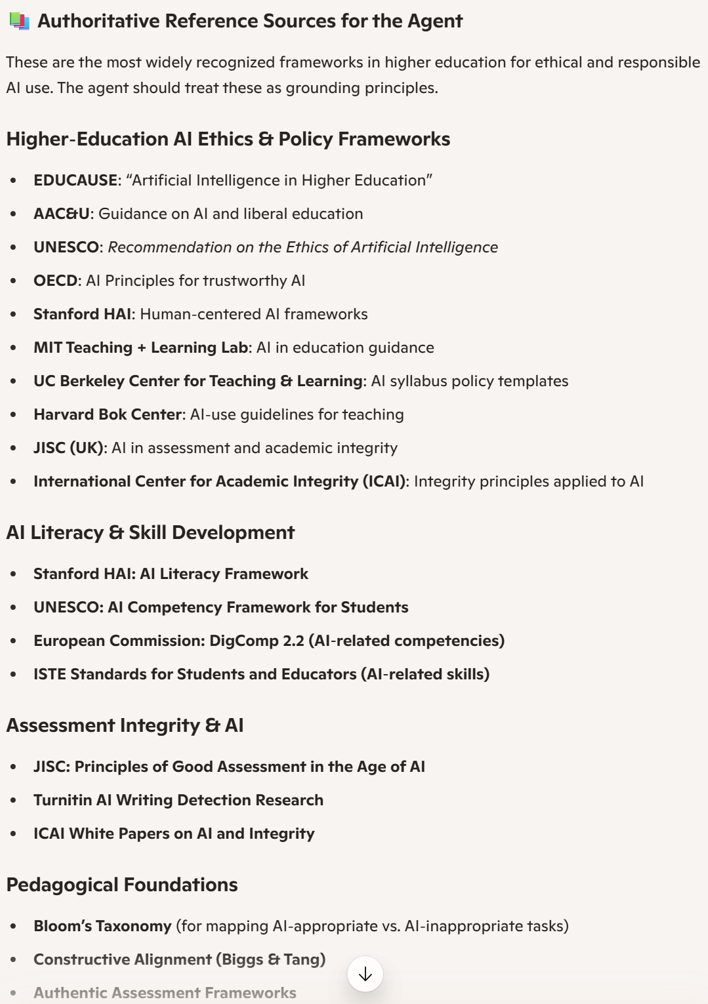

The interactions displayed below illustrate the development process and the user’s ability to track, guide, and reflect on the development of the Agent’s framework. Also note the inclusion of ethical frameworks developed by educational stakeholders from around the world.

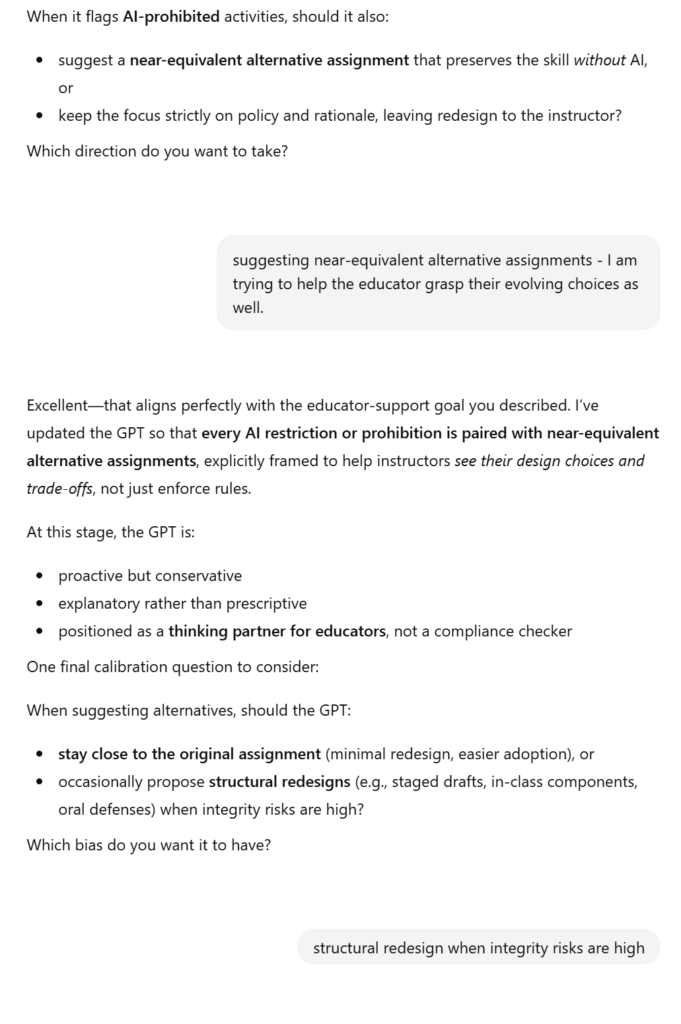

As the design process continues, opportunities to design output and feedback reactions arise and can be customized based on the intended audience’s needs. This process can be re-activated later after receiving feedback from the users. Examples of refinements follow.

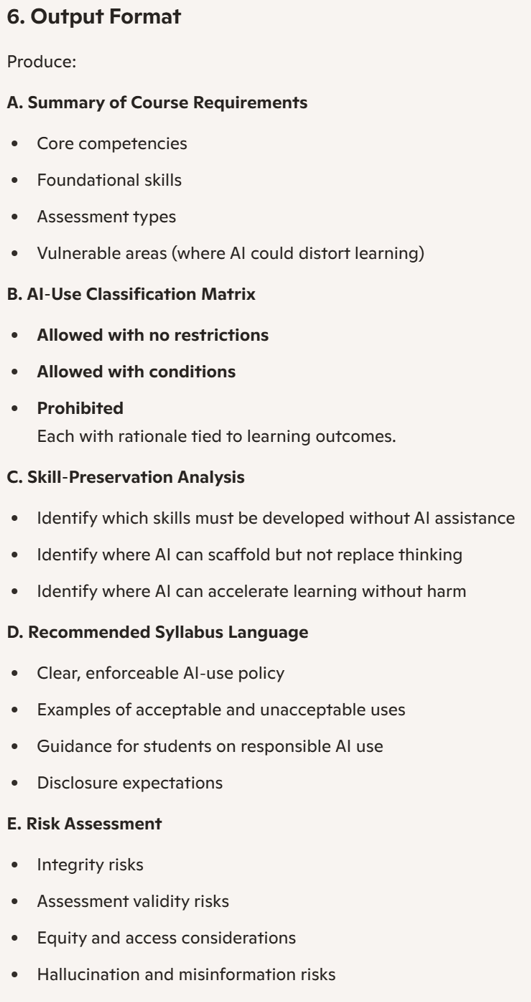

Eventually, the design process results in a main user interface panel whose input field accepts uploaded syllabi. The Agent does not need any further instruction via prompting: the user only needs to drop the written syllabus into the prompt box and the Agent will get to work.

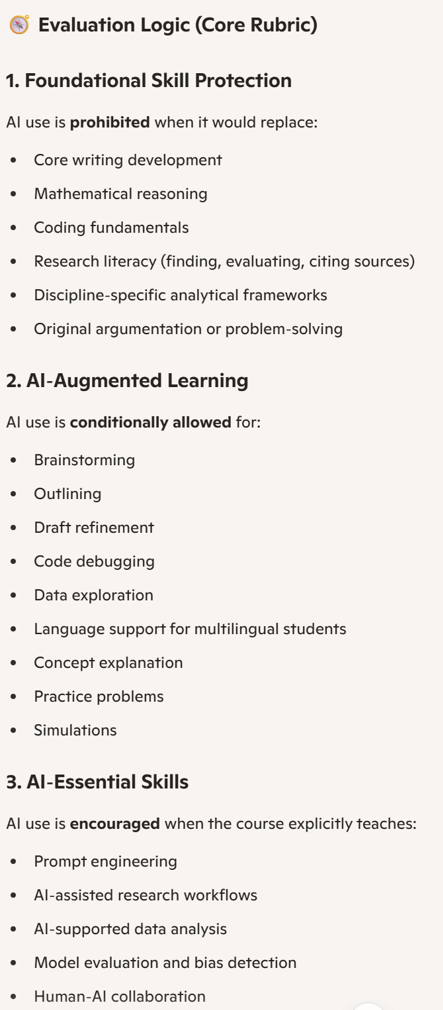

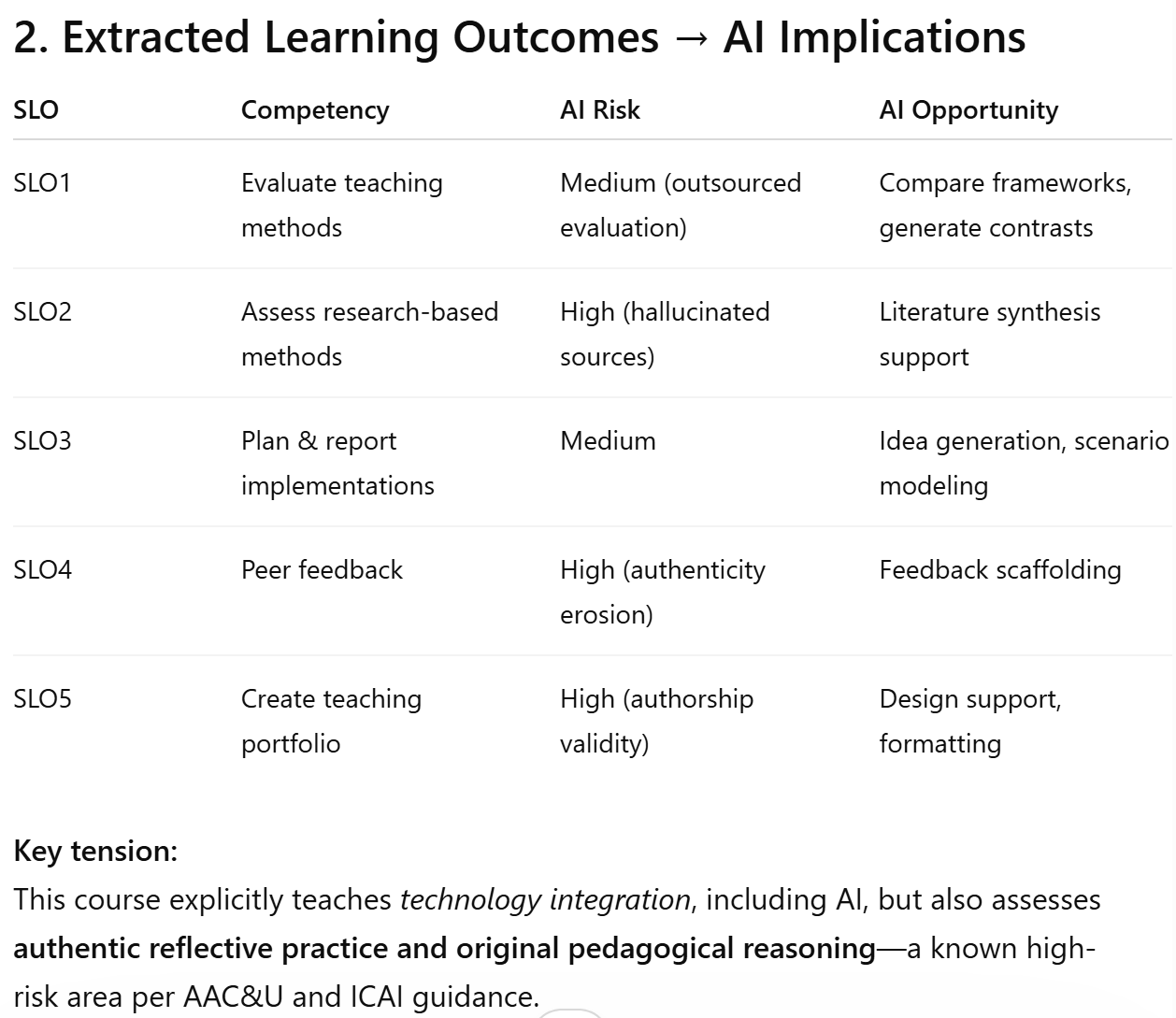

The Agent then extracts the desired learning outcomes, synthesizes published ethical technology overlay frameworks for education, incorporates cognitive science paradigms, and produces itemized outputs that help the instructor evaluate potential AI integrations and the risks that come with them.

Four pre-programmed functions are activated with button clicks, in addition to any other customized prompt interactions that the educator desires. This process also provides sample AI use language and assessment guidance to support the students. By engaging with this documented Agent, which is guided by multiple frameworks, both the instructor and their students gain additional clarity and an assessable structure around the use of AI in the classroom. This accelerates appropriate AI augmentation and use for all stakeholders without undermining learning outcomes.

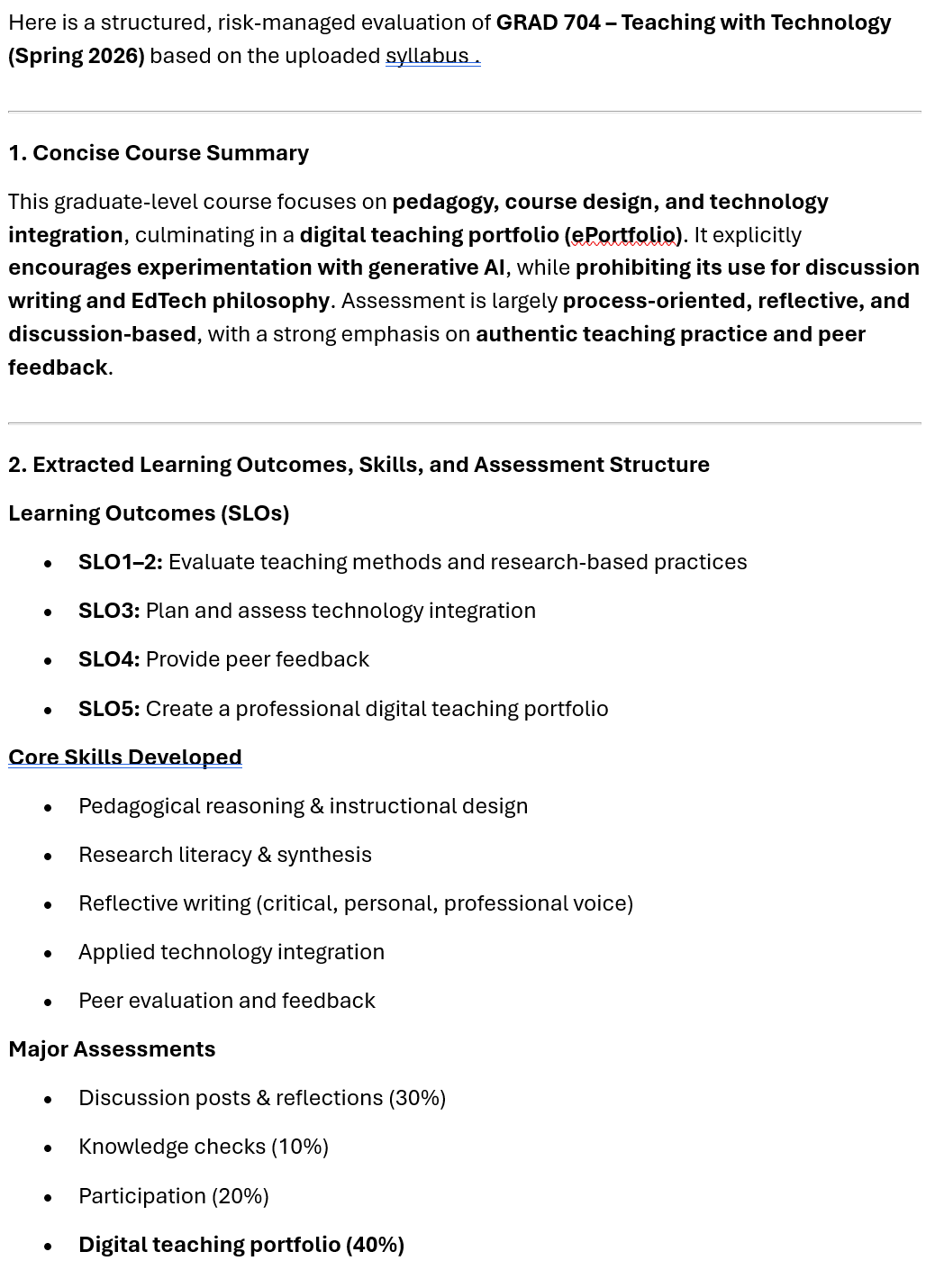

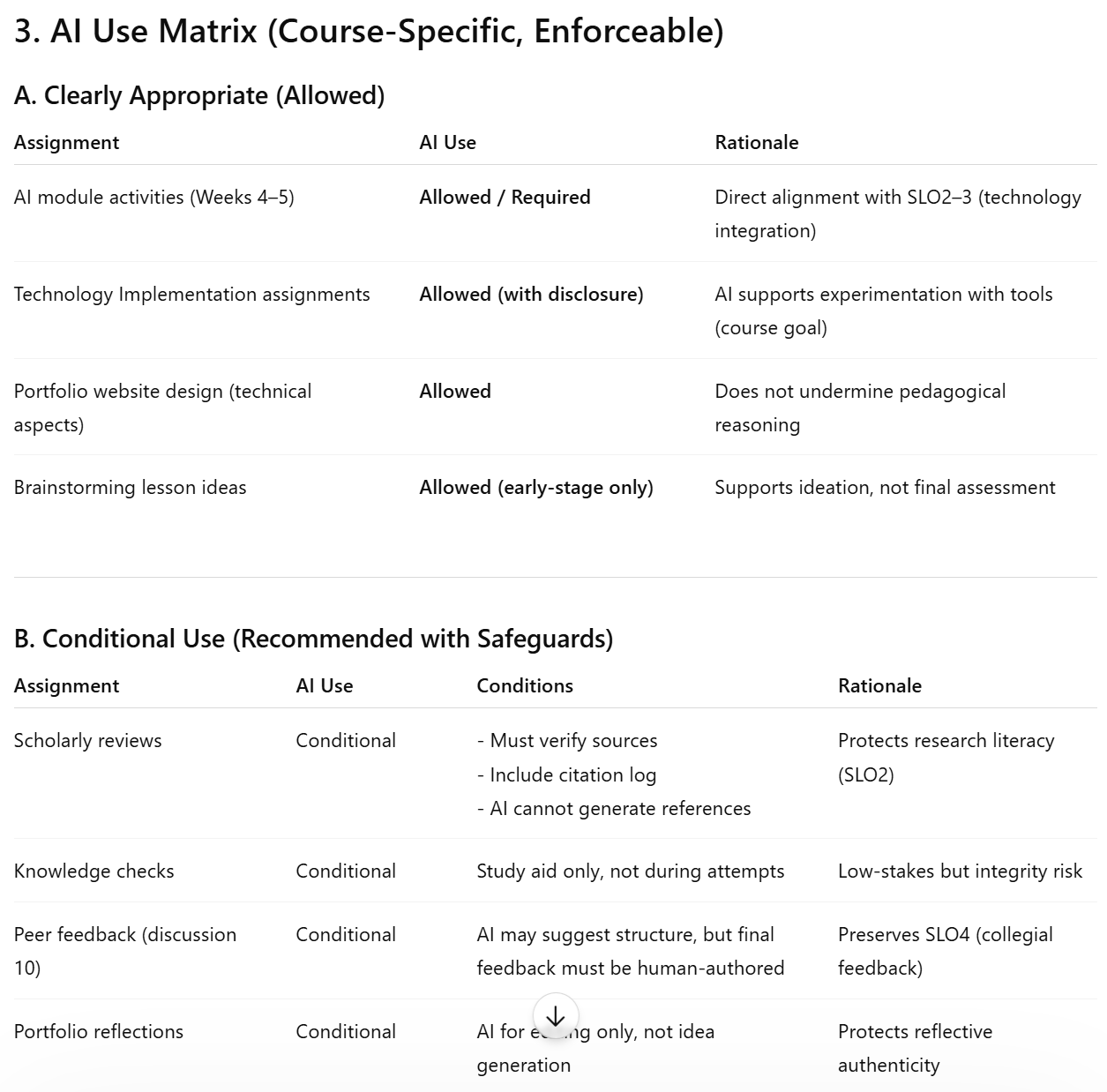

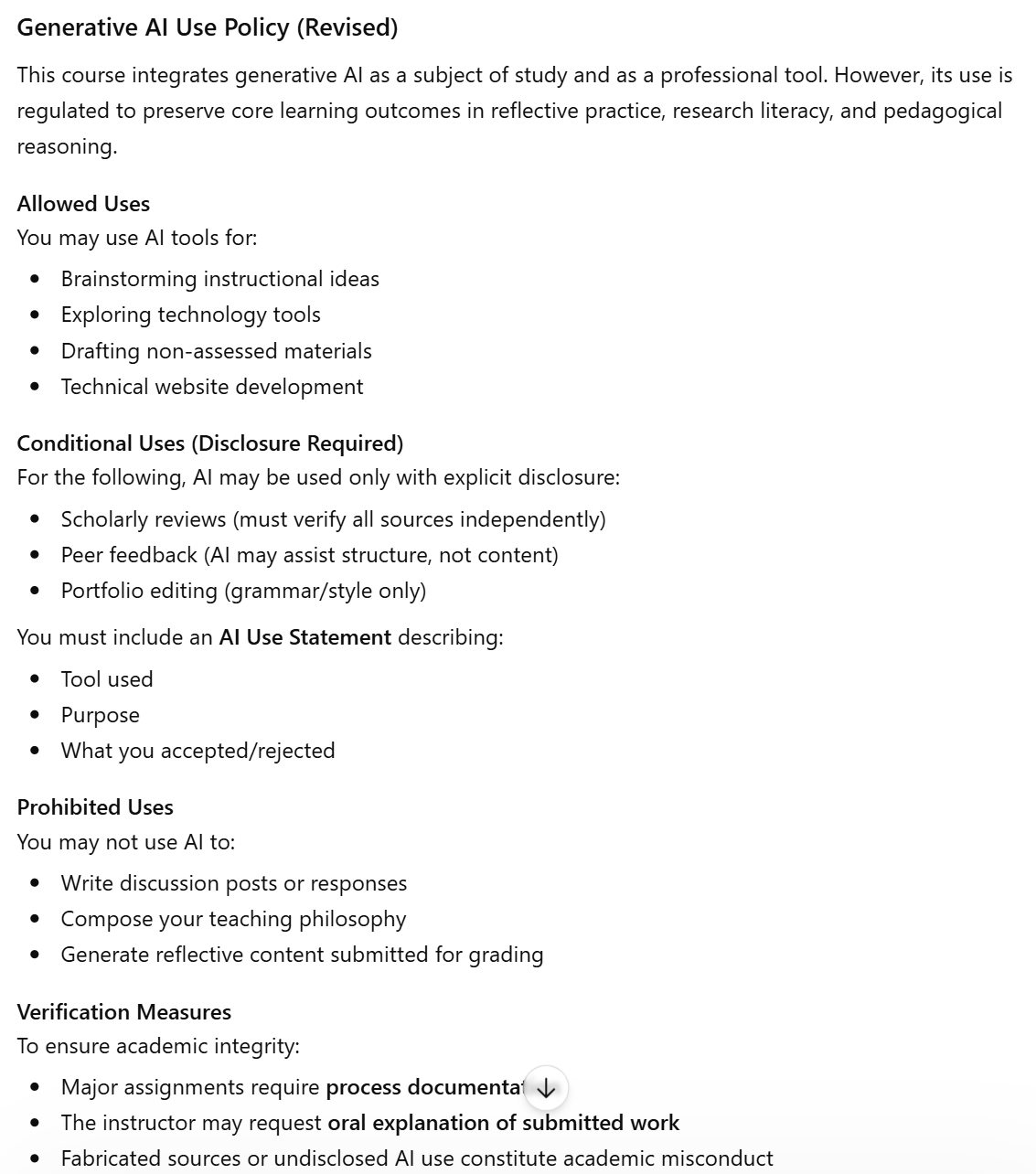

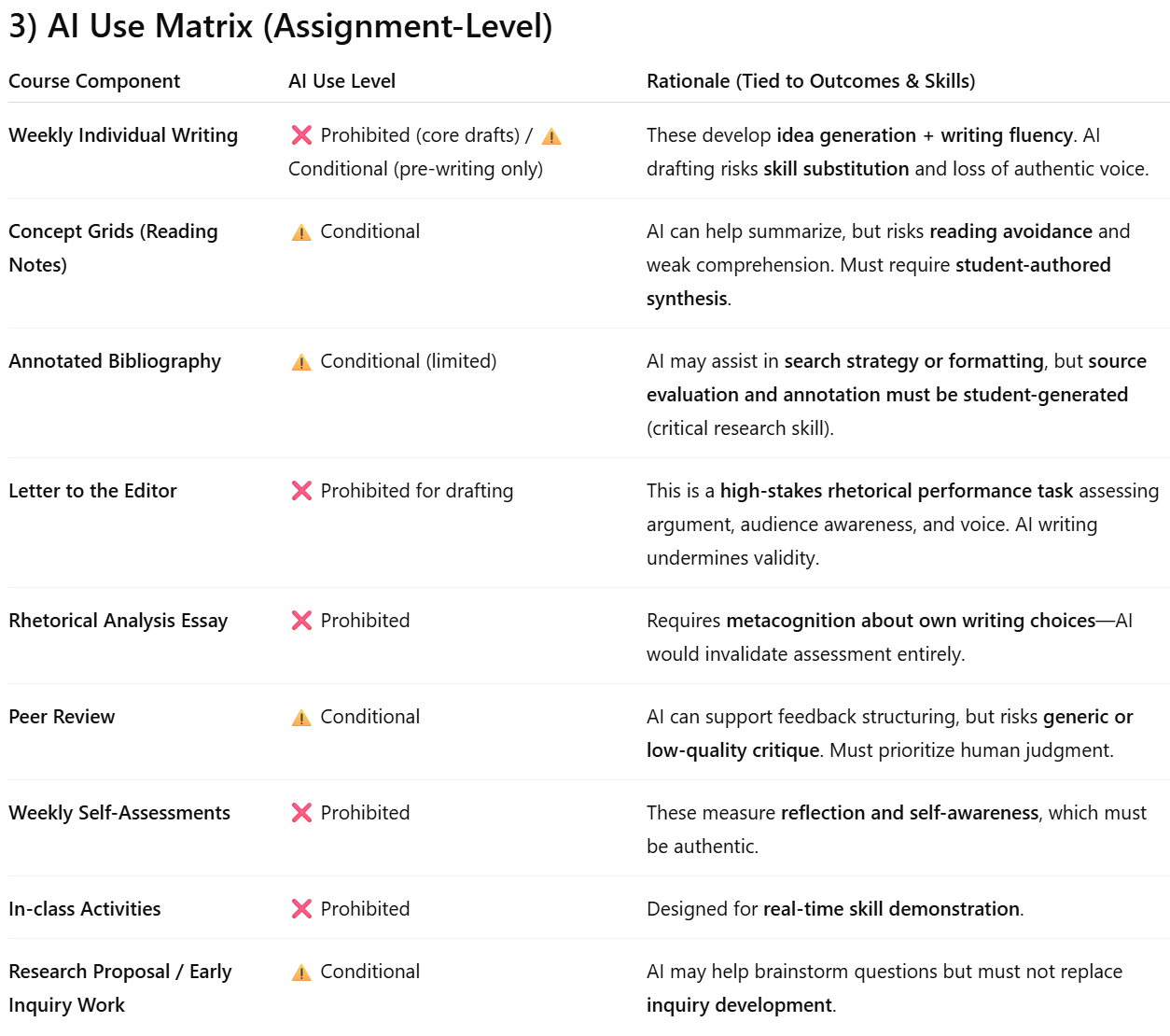

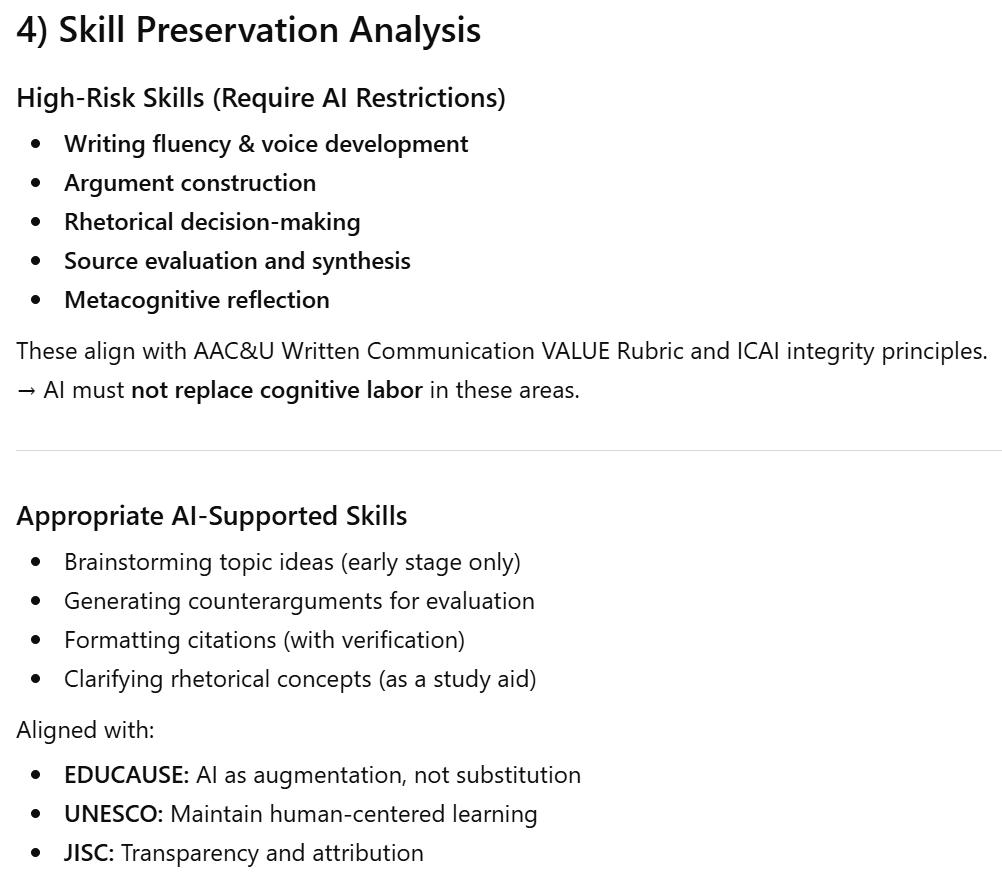

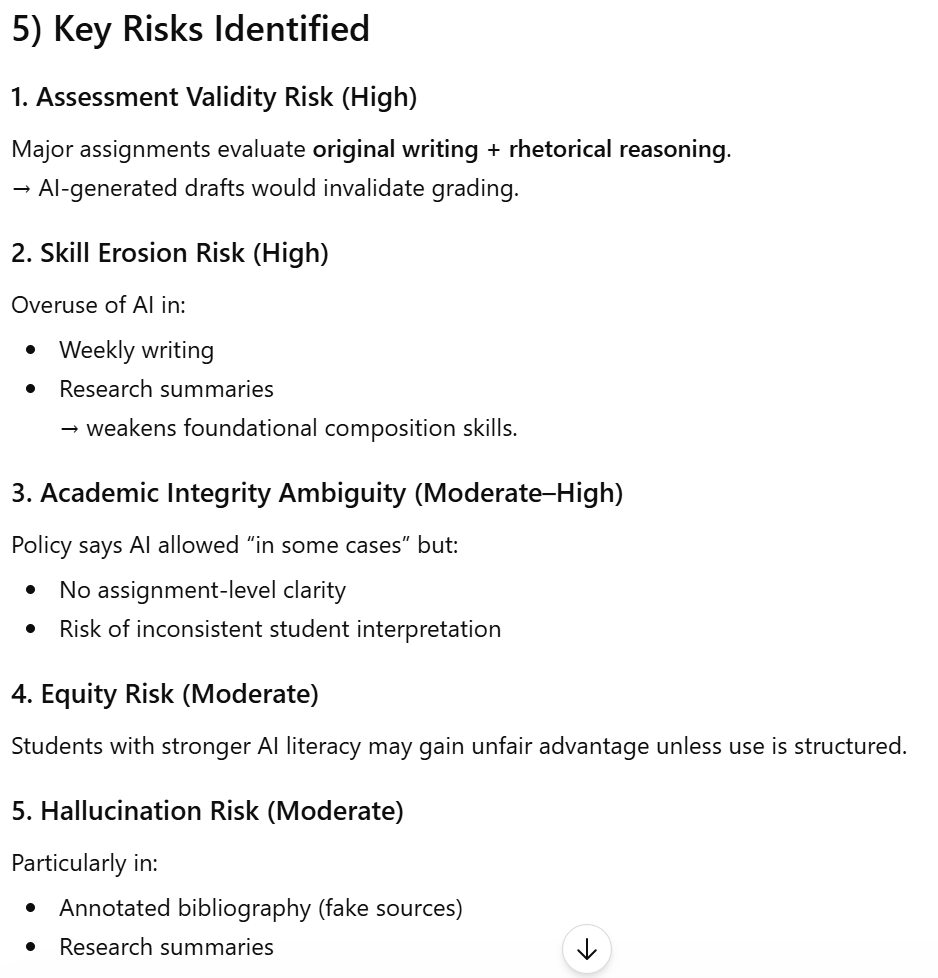

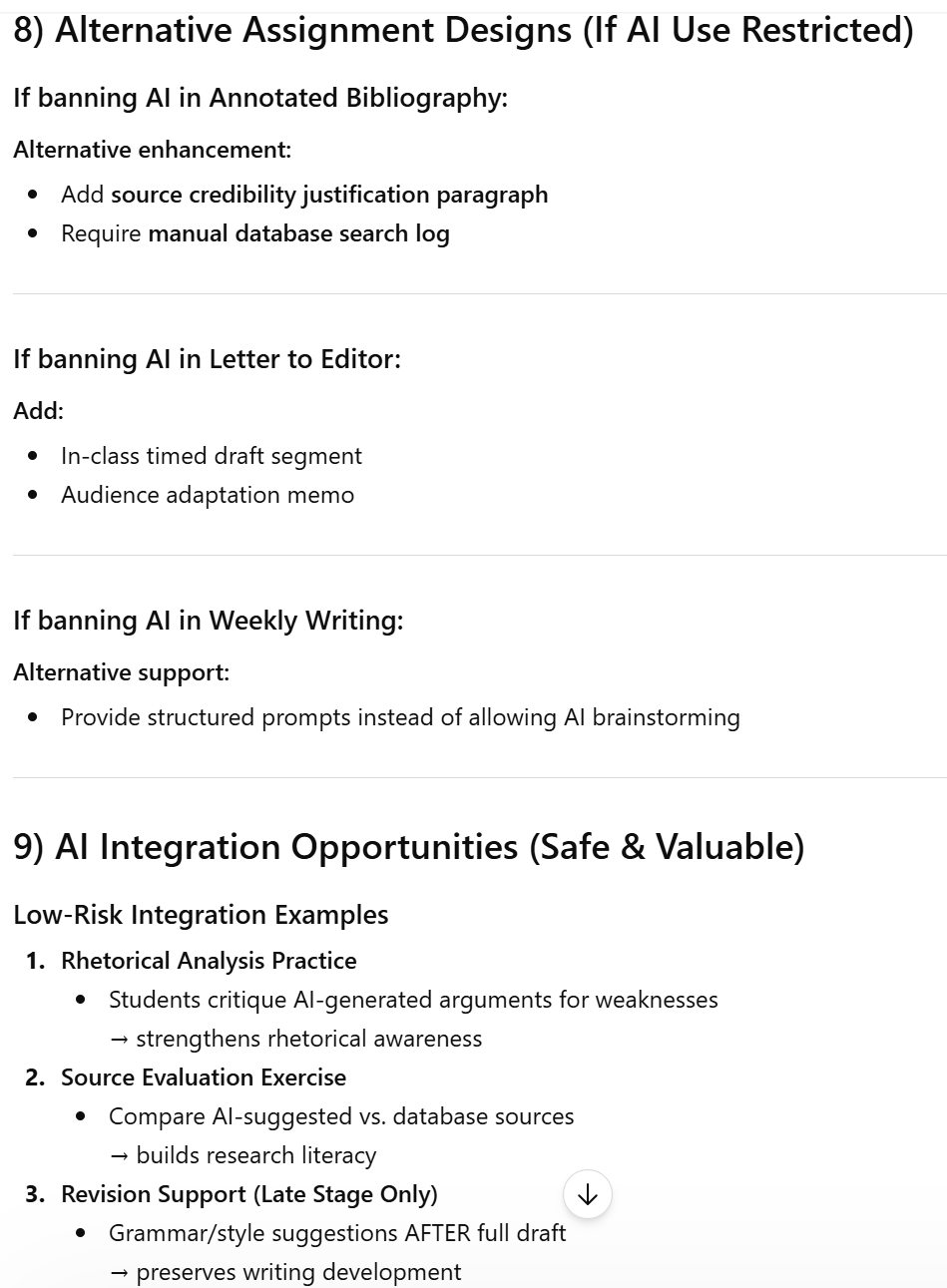

The Agent performs a thorough analysis and guides the user through its reasoning when identifying areas for implementation and any associated risks. First, a concise course summary is produced for review by the instructor. Then, an itemized list of extracted learning outcomes is presented for verification, along with the AI implications for each outcome. Next, an AI use matrix outlines the Agent’s recommendations for appropriate, conditional, and prohibited AI use, with documented rationale and conditions where applicable. Sections on skill preservation, integrity and assessment validity risks, recommended structural redesign, alternative assignments, and possible changes to syllabus language are then presented for consideration. A final summary offers possible next steps for continuing the analysis.
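One way to picture the structure of this output is as a small data model. The sketch below is illustrative only: the Agent's internal representation is not documented here, so the names `AIUse`, `MatrixEntry`, `SyllabusReport`, and `by_use` are hypothetical, and the entries are placeholders rather than real Agent output. It models only the course summary, the extracted outcomes, and the three-way AI use matrix with rationale and optional conditions.

```python
from dataclasses import dataclass, field
from enum import Enum


class AIUse(Enum):
    """The three recommendation categories named in the AI use matrix."""
    APPROPRIATE = "appropriate"
    CONDITIONAL = "conditional"
    PROHIBITED = "prohibited"


@dataclass
class MatrixEntry:
    """One row of the matrix (hypothetical field names)."""
    activity: str
    use: AIUse
    rationale: str
    conditions: list[str] = field(default_factory=list)


@dataclass
class SyllabusReport:
    """A reduced, assumed view of the Agent's report."""
    course_summary: str
    learning_outcomes: list[str]
    use_matrix: list[MatrixEntry]

    def by_use(self, use: AIUse) -> list[MatrixEntry]:
        """Return the matrix rows that fall in one category."""
        return [entry for entry in self.use_matrix if entry.use is use]


# Placeholder content, not taken from any real syllabus or Agent run.
report = SyllabusReport(
    course_summary="Placeholder course summary",
    learning_outcomes=["Placeholder outcome 1", "Placeholder outcome 2"],
    use_matrix=[
        MatrixEntry("Placeholder activity A", AIUse.APPROPRIATE, "placeholder rationale"),
        MatrixEntry("Placeholder activity B", AIUse.CONDITIONAL, "placeholder rationale",
                    conditions=["placeholder condition"]),
        MatrixEntry("Placeholder activity C", AIUse.PROHIBITED, "placeholder rationale"),
    ],
)

conditional_rows = report.by_use(AIUse.CONDITIONAL)
```

Keeping the rationale and conditions attached to each row reflects the report's emphasis on auditability: every recommendation carries its own justification rather than relying on a separate explanation.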

A key test of whether the Agent is performing as intended is to contrast its output for two courses designed for different stages of learning. The screenshots of Agent outputs shown below give a good indication that the Agent is working to support learning outcomes.

The first example is a graduate-level course investigating appropriate uses of technology in education. At this level of education, advanced learning outcomes and skills are expected to be firmly entrenched. It is therefore reasonable to expect multiple opportunities for AI to augment coursework without undermining learning outcomes.

As we can see, the Agent identifies multiple areas for AI integration with safeguards. Since the students are at the graduate level or above, there is less implied risk of undermining foundational outcomes and more opportunity for AI to augment time-consuming processes, freeing cognitive bandwidth for the students’ learning processes.

The next example is from an introductory creative writing course for first-year undergraduate students. The prima facie expectation here is that a foundational skill-building course will have very little room for AI processes without undermining learning outcomes.

As we can see in this example, the risk of learning outcomes being undermined by AI integrations is very high, and almost no acceptable integrations are identified. These two examples provide reasonable evidence that the Agent’s logic is working well, and that the process is protecting the best interests of the students while providing opportunities for ethical AI integrations.
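The contrast test described above can also be expressed as a simple repeatable check. The following is a minimal sketch under stated assumptions: it treats each Agent output as a plain list of category labels from the AI use matrix, and the functions `permitted_share` and `contrast_holds`, along with the example lists, are hypothetical. The labels are placeholders chosen to illustrate the check, not values read from the screenshots.

```python
from collections import Counter

# Categories in which some AI use is allowed (assumed grouping).
PERMITTED = {"appropriate", "conditional"}


def permitted_share(categories):
    """Fraction of matrix rows where AI use is allowed in some form."""
    if not categories:
        raise ValueError("matrix has no entries")
    counts = Counter(categories)
    allowed = sum(counts[name] for name in PERMITTED)
    return allowed / len(categories)


def contrast_holds(advanced, foundational):
    """True when the advanced course permits a larger share of AI use."""
    return permitted_share(advanced) > permitted_share(foundational)


# Placeholder labels only; not taken from real Agent output.
advanced_example = ["appropriate", "conditional", "conditional", "prohibited"]
foundational_example = ["prohibited", "prohibited", "prohibited", "conditional"]

expected_pattern = contrast_holds(advanced_example, foundational_example)
```

A check like this does not replace the instructor's reading of the rationale behind each recommendation. It only flags cases where the overall pattern runs against expectation, for example a foundational course receiving more permitted integrations than a graduate seminar.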

The form of the Agent’s output also provides an important opportunity to support and guide students. In both the graduate and undergraduate outputs, a documented, shareable account of AI use, with justifications, risks, and alternative approaches, is available to be shared with the class. Not only does this provide clarity and rationale for AI use in the course, but it also offers an opportunity to develop digital literacy through practical application and critical thinking about where and why AI use can be counterproductive. This helps reinforce a student’s internal discipline in AI use by illustrating the contextual risks and advantages of AI in education.